It's great that companies are using the scientific method for growth. What used to be “billboards and a prayer” has evolved into repeatable and measurable systems for growth.

But many teams don’t get as much value out of the scientific method as they could. Even worse, they often apply it incorrectly, which produces misleading results.

By now, most practitioners responsible for growth know they should be using the scientific method to guide their efforts, but until recently, many hadn’t actively used it since 6th grade science class. (Luckily, there are some useful resources out there for ramping up on building processes for growth.)

But if being effective in your job depends on using the scientific method, you need to study how the best teams in the world use it. And the best funded and most experienced teams that use the scientific method aren't in tech. They're in science. Unlike the growth community, which is still in its first decade of existence, the science community has been refining the scientific method for centuries.

The Scientific Method From the POV of This Scientist Turned Growth Practitioner

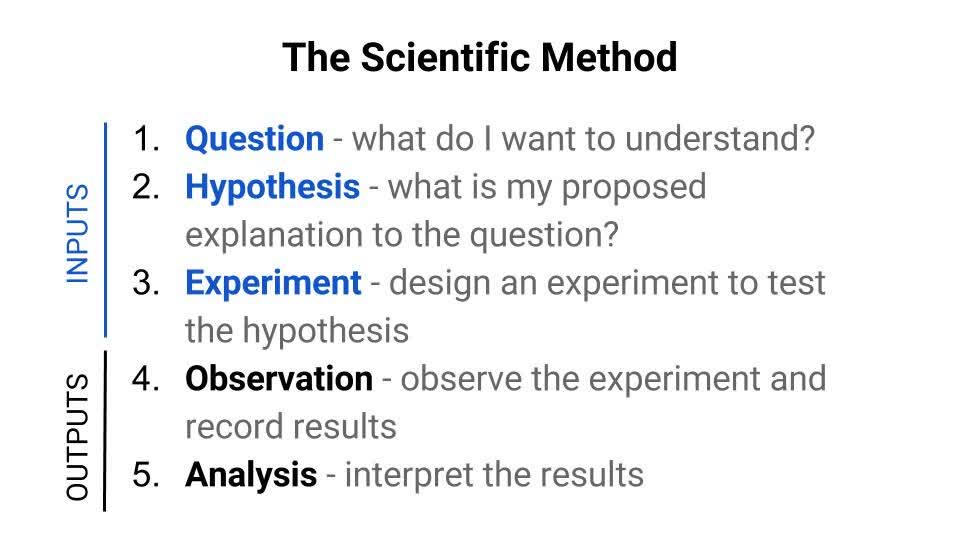

Let’s start with a quick recap of the steps of the scientific method:

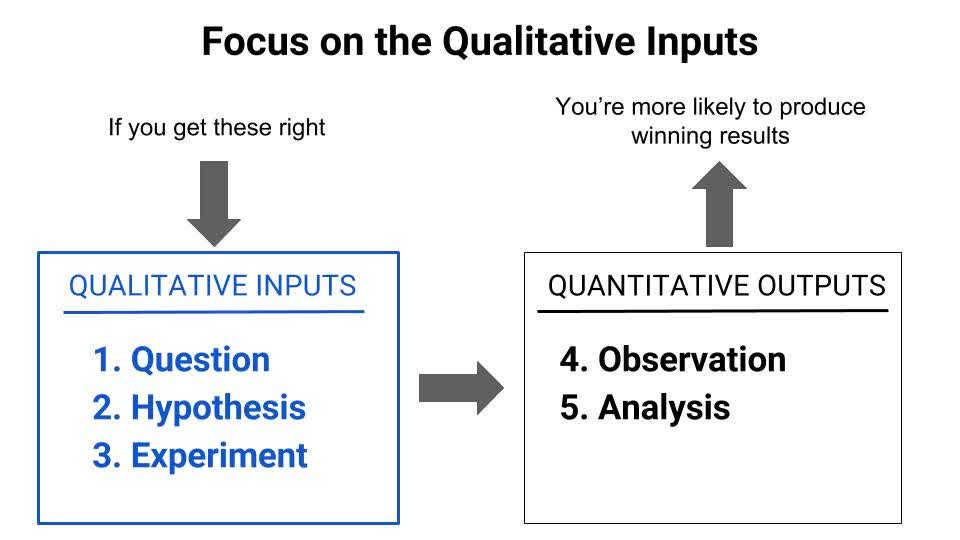

I break the method into five steps, categorizing steps 1-3 as the qualitative inputs to the method, and steps 4-5 as the quantitative outputs.

What I’ve learned from working in neuroscience labs is that the qualitative inputs - question, hypothesis, and experiment design - are the most important steps of the process. If you get these steps right, you increase your chances of running successful experiments that produce valid results and actionable insights.

Any competent data analyst on a growth team (or lab tech in a research org) knows which stats tests and tools to use to collect and analyze results.

So, what separates the good growth teams (and research labs) from the great ones?

It’s the quality of the questions they ask, the hypotheses they come up with, and how they construct their experiments.

In this post, I'll walk you through 3 ways you can leverage learnings from the science world to tackle the qualitative inputs to the scientific method more effectively:

Question - Ask “outside” questions to reduce bias in your process

Hypothesis - Relentlessly seek the “minimum” in your MVTs to find a strong hypothesis faster

Experiment - Control and blind your experiments to produce accurate results and reliable insights

If you evolve how your growth team approaches these three areas, you’ll drive breakthroughs in your growth processes so you can launch more successful experiments on a faster timeline.

Ask “Outside” Questions

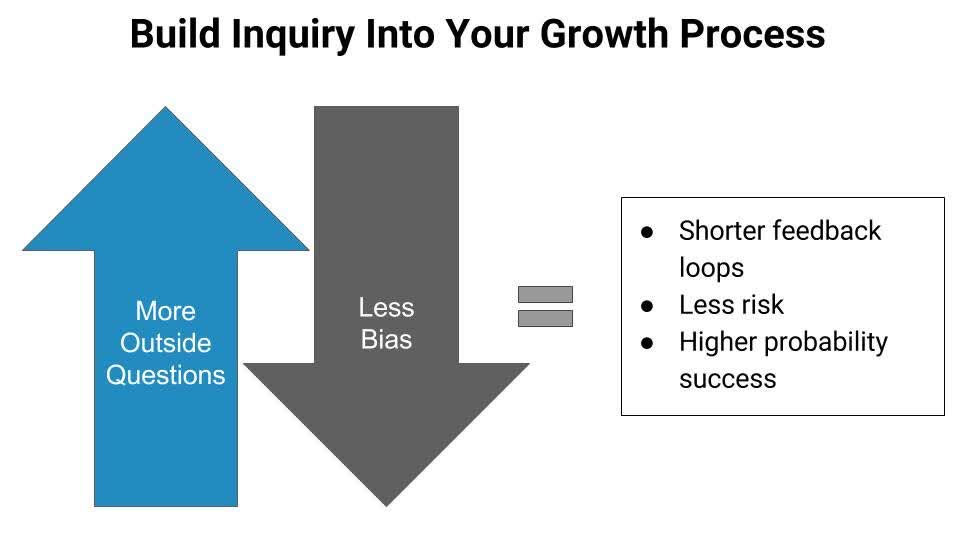

The quality of the questions we ask drives the quality of insights our growth process produces.

This is why it’s critical to build questioning into our growth processes, rather than leaving it to chance.

Yet, many of us still aren’t that good at identifying the questions that will drive our businesses forward. Sometimes we rely on gut, whatever’s top of mind, or a directive from leadership to define the questions that will steer our growth strategy.

It's hard to see our own work objectively, which makes it even harder to ask unbiased questions that pressure test our assumptions. Established opinions and preferences limit our scope for effective questioning, especially when we’re trying to move quickly.

To neutralize the negative impact of their biases, every lab I’ve worked with integrated regular “outside” questioning into their system. They actively solicited external perspectives, which allowed them to:

Shorten their feedback loops

Mitigate the risks of going off track

Increase the probability of running successful experiments

The most powerful way they’ve built questioning into their process is with the weekly “pizza review.”

What Is the Weekly Pizza Review?

The pizza review is a weekly meeting scheduled with other labs. The meetings are usually an hour, with one or two presentations from each lab. It’s a tit-for-tat exchange in which both labs ask each other unbiased but well-informed questions about their ongoing projects.

They’re called “pizza reviews” because thousands of years of research have determined that the #1 way to get underpaid scientists into a room is by offering free lunch.

We’ve all heard the adage a million and one times - “your network is everything.” So, we go to the occasional networking event or conference, maybe make a new connection or two, and listen to growth leaders from Slack, Facebook, and Uber share their latest learnings. Networking... check!

Not so fast - this type of networking is superficial. It doesn’t give you the opportunity to go deep on your work with outside professionals who can pressure test your process and provide feedback.

Though the pizza review sounds informal, it’s not. It’s foundational to how the best labs operate and is baked deeply into the culture of experimental inquiry in the science world. It isn’t about meeting up with a handful of fellow practitioners once in a while, eating a few slices of pizza, and trading high-level tactics.

It’s about regular, scheduled, in-depth, unbiased, and targeted inquiry to improve the questions we ask, the hypotheses we come up with and the experiments we design. All of the best labs do it.

When I made the transition to growth, I was surprised to learn that, as a community, we don’t have a similar practice. It’s a big hole in our processes as practitioners of the scientific method. When I went through the Reforge Growth Series, I often heard participants say they were hoping to meet other growth professionals and learn how they run their teams. In science, I didn't have to apply to a program to do this. When I joined my first lab, I had a meeting with outside researchers my first week.

Let’s walk through a few learnings on how to make it easier and more actionable to create our own versions of the pizza review.

Find People Who Are Familiar With Your Field but Not Direct Competitors

While I was in the multiple sclerosis lab at Hopkins, our most fruitful pizza review was with an ALS group. Both diseases have autoimmune components, so we spoke the same language. But MS and ALS are also fundamentally different diseases, which meant we didn’t have to worry about stealing each other’s spots in the top journals.

Similarly, while at Feastly, I have set up regular lunch meetings with growth or product people working at food companies or marketplace companies - but never both. The goal is to solicit questions that are well-informed, but still lend fresh perspectives.

There are lots of ways to find these people:

Tap into existing networks of growth practitioners via companies like Reforge, Growthhackers, or Tradecraft.

Search for growth practitioners on LinkedIn at companies you admire. I have yet to be turned down on a cold outreach. People don’t like being sold to, but they love being asked for advice.

Build relationships with product, marketing, sales, or other teams within your company. In addition to getting fresh perspectives on your ideas, it will help bring about a growth-oriented culture.

Launch a small growth mastermind group with practitioners from various companies - meet regularly and allow members to present projects for feedback and questioning.

If You’re Really Concerned About Keeping Your Data Private, Use Case Studies or Literature at Your Pizza Reviews

We did this occasionally in the lab if we were concerned about a major result leaking too early. In these cases, we’d pick analogous papers that had been published recently by other labs and present those in place of our own work. We’d write down all of the questions we received about the paper (people would really let loose in these cases!) and take them back to private meetings, where we’d identify overlap with our own projects.

Relentlessly Seek the “Minimum” in Your MVTs

Whether in research or growth, great teams seek out ways to maximize the percentage of their projects that produce positive results. Once they’ve incorporated unbiased questioning into their process, the next step is to run better minimum viable tests (MVTs) so they can identify where they do and don’t want to invest.

This is an especially dire concern in the science world because projects in medical research require enormous investments - all of the projects I led at Hopkins or UCSF cost tens of millions of dollars. The ability to find “validating data” is a skill researchers (and their bosses!) value above nearly anything else.

There are a handful of learnings I’ve brought from my time in the lab on how to collect validating data to inform better hypotheses. Let’s walk through them below.

Start “In Vitro”

In vitro literally means “in the glass” and is used to describe experiments that are conducted entirely in test tubes. Eventually, all medicines must go through in vivo experimentation to be tested in live animals, before going on to save lives.

Yet, virtually every major in vivo project starts in a tube or petri dish because in vitro experiments give you complete control over the testing environment, and are far less expensive.

Right now you might be saying, “I know, I know, build an MVP before going all-in. This is old news!” But do you consistently follow that advice?

Under the pressure of aggressive growth targets from leadership, teams often get carried away without even realizing it, forgetting the “minimum” part of their MVT or MVP.

Often, they end up with failed experiments that are expensive in both resources and time, when a leaner test could have produced the same learning in days or weeks instead of months.

For example, a recent company I spoke with thought introducing a subscription model would be a huge growth opportunity. Instead of kicking off their inquiry with an “in vitro” test, they jumped directly to “in vivo.”

They changed the product and payment system, onboarded existing and new users, and after months of investment from product, design, engineering and marketing, they realized their customers just didn’t want a subscription.

A one-day or one-week in vitro test may have quickly revealed that a subscription pricing model wasn’t appealing to their customer base: use a landing page builder to mock up a subscription pricing page, drive traffic to it via ads, and measure conversion rates against current pricing.

However you do it, the point is to use an in vitro test to get validation that an in vivo test is worth the additional resources. Tools like Unbounce, Optimizely, and paid advertising make these kinds of in vitro tests easy and fast. And since you aren’t dealing with existing users who were acquired through different channels, had varying CACs, and have different lifetimes, the control is far higher.
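To make “measuring conversion rates against current pricing” concrete, here is a minimal sketch of one common way to compare two landing pages: a pooled two-proportion z-test. The visitor and conversion counts are made up for illustration, and the function name is my own, not something from a particular tool.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rate
    between page A (current pricing) and page B (subscription mock-up)."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both pages convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("Cannot test: no variation in the observed conversions")
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical in vitro test: 1,000 ad clicks sent to each landing page.
z, p = two_proportion_z_test(conv_a=60, n_a=1000, conv_b=38, n_b=1000)
print(f"z = {z:.2f}, p = {p:.3f}")
```

A negative z here means the subscription mock-up converted worse than current pricing, and a small p-value suggests the gap is unlikely to be noise. This approximation assumes reasonably large samples in both groups.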

One of my favorite mentors, Anantha Katragadda, told me when I was starting out in growth that if I couldn’t think of a way to fake a product experience in order to get validating data, I simply wasn’t trying hard enough.

Curate Your Own Journal of Failed Projects

One of the major challenges in science is that there is no journal of failed projects because only successful projects are published.

Let’s say a highly influential paper comes out with an obvious next step. Over the course of the next 10 years, 50 different scientists may say “Aha! I can’t believe nobody has published that no-brainer follow-up study yet. I will devote my own time and resources to it.”

Unfortunately, there may have been a flaw in the initial paper or some other reason that the no-brainer follow-up just won’t work. Fifty labs have now wasted valuable resources - an unfortunate result for the scientific community.

The best research labs approach this problem by seeking out negative results. They spend as much time asking for and sharing failed results as they do successful ones.

I’ve been in the unfortunate position where my team ran a test that another team in the organization had already run unsuccessfully. Ever since, I’ve made sure that every major project is shared even if the results aren’t favorable.

Sharing and collecting negative results isn’t fun, so it’s only going to happen if you:

Bake it into your process

Support open sharing of failed experiments within your company culture

If you don’t do both of these things, failed projects will be swept under the rug by whoever is working on them, only to resurface later as wasted time and resources when an unsuspecting team member runs a similar experiment.

Here’s how I keep track of failed projects with the help of my growth team at Feastly:

Build a project pipeline that requires analysis and sharing before any project can move to “complete.”

Whenever we meet with outside colleagues, we ask for one thing that’s worked recently, as well as one thing that hasn’t.

We build acceptance of and learning from failed experiments into our culture. Growth teams are optimized for learning, so finding negative results isn’t a bad thing because it facilitates learning. We celebrate the learnings that result from failed experiments constantly. For us, an automated celebration message is sent to Slack anytime a project finds positive results OR negative ones, and we’re diligent about measuring individual performance independent of the ratio between positive and negative results.
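As a rough illustration of the first and third points, here is a sketch of a pipeline rule that refuses to mark a project “complete” until an outcome, an analysis, and a sharing step are recorded, and that produces the same celebration message for positive and negative results. The class and field names are hypothetical, and actually posting the message to Slack is left out.

```python
from dataclasses import dataclass


@dataclass
class Experiment:
    name: str
    outcome: str = ""      # "positive" or "negative"
    analysis: str = ""     # short write-up of what was learned
    shared: bool = False   # has the write-up been shared with the team?
    status: str = "running"

    def complete(self) -> str:
        """Move to 'complete' only when analysis and sharing are done.
        Returns the celebration message to broadcast to the team."""
        missing = []
        if self.outcome not in ("positive", "negative"):
            missing.append("outcome")
        if not self.analysis.strip():
            missing.append("analysis")
        if not self.shared:
            missing.append("shared")
        if missing:
            raise ValueError(
                f"Cannot complete {self.name!r}; missing: {', '.join(missing)}"
            )
        self.status = "complete"
        # Same message shape for wins and losses: the learning is the point.
        return f"Experiment {self.name!r} finished with a {self.outcome} result: {self.analysis}"


test = Experiment(name="subscription landing page")
test.outcome = "negative"
test.analysis = "Subscription pricing converted worse than current pricing."
test.shared = True
message = test.complete()
print(message)
```

The design choice that matters is that a negative outcome passes through exactly the same gate and gets exactly the same announcement as a positive one.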

Control & Blind Your Experiments

Growth teams optimize for speed, which often makes running clean projects harder, while the culture of “move fast and break things” is often used to justify taking liberties on controlled design, proper stats, or both.

Given how projects become intertwined, a misinterpreted or poorly designed project can unravel months of work or set teams up to chase losing opportunities. Uncontrolled results can become building blocks for resource-intensive follow-ups, with the flawed data only becoming apparent as the follow-ups miss their mark.

To publish a paper, every result must reject the null hypothesis.

This means that you show, with statistical significance, that the result you are seeing is unlikely to be due to random chance, and that your design rules out biased observation. Both of these requirements are easy to miss, so here are a few straightforward tips to help you design sound experiments.

Understand How to Properly Control Your Experiments

Placebo controls are not just for medical research.

Let’s say you launch an ad or email campaign retargeting users who purchased and later churned with copy around “missing out on what everyone is talking about.”

Your hypothesis is that FOMO is an effective strategy to increase resurrection rates.

Having a control group that doesn’t get the ads or emails is not enough to validate your hypothesis, even if the results are outstanding. That comparison changes two independent variables at once: whether users are contacted at all, and whether the copy uses FOMO. You need a “placebo” group that gets the same frequency of ads or emails, but with different copy, to isolate the impact of the copy.

For every experiment, make sure you control for every independent variable in your hypothesis.
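One way to set this up is a three-arm split, sketched below under a few assumptions: the arm names and the experiment label are hypothetical, and assignment is done by hashing the user ID so the same user always lands in the same arm. Comparing the FOMO arm to the placebo arm isolates the copy, while comparing the placebo arm to the holdout shows the effect of simply being contacted.

```python
import hashlib
from collections import Counter

# Hypothetical arms: no contact, neutral copy, and FOMO copy.
ARMS = ("holdout", "placebo_copy", "fomo_copy")


def assign_arm(user_id: str, experiment: str = "resurrection-fomo") -> str:
    """Deterministically assign a user to one of the three arms.
    Hashing experiment + user ID keeps assignments stable across runs
    and independent between different experiments."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).hexdigest()
    return ARMS[int(digest, 16) % len(ARMS)]


# Sanity check on made-up user IDs: the arms should be roughly equal in size.
counts = Counter(assign_arm(f"user-{i}") for i in range(3000))
print(counts)
```

Both messaged arms should then receive the same number of ads or emails on the same schedule, so the copy is the only thing that differs between them.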

Understand How to Properly Blind Your Experiments

Any project that relies on qualitative observation should be blinded.

Let’s say you’re watching user sessions to compare how high LTV users interact with a feature compared to low LTV users. At the end of the session, you give the user a grade from 0-10, 0 indicating they didn’t understand how to use the feature and 10 indicating they easily understood it.

Your hypothesis is that low LTV users don’t understand how to use the feature.

If you view 10 sessions from high LTV users, and then view 10 from low LTV users, your results will be invalid. Since you’re coming in with a hypothesis, you’re far more likely to perceive things that support your ideas.

The correct way to conduct this test would be to have a colleague assign a number to all 20 videos and then send them to you. You record the results and then send them back. They then “unblind” the data by noting which videos were of high LTV users and which weren’t.

When you’re designing every experiment, verify that human bias won’t skew the observation of the data.
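The colleague’s side of that blinding procedure can be sketched in a few lines. This is an illustration only: the function names, the session IDs, and the group labels are made up, and in practice the colleague would also rename the video files to match the codes.

```python
import random


def blind_sessions(sessions, seed=None):
    """sessions: list of (session_id, group_label) pairs.
    Returns (codes, key): shuffled neutral codes for the rater,
    and a key that only the colleague keeps until scoring is done."""
    rng = random.Random(seed)
    shuffled = list(sessions)
    rng.shuffle(shuffled)  # remove any ordering that hints at the group
    key = {}
    codes = []
    for i, (session_id, group) in enumerate(shuffled, start=1):
        code = f"video-{i:02d}"
        key[code] = (session_id, group)
        codes.append(code)
    return codes, key


def unblind(scores, key):
    """scores: {code: grade from 0-10}. Returns the grades grouped by label."""
    by_group = {}
    for code, score in scores.items():
        _, group = key[code]
        by_group.setdefault(group, []).append(score)
    return by_group


# Hypothetical data: 10 high LTV and 10 low LTV session recordings.
sessions = [(f"s{i}", "high_ltv") for i in range(10)]
sessions += [(f"s{i}", "low_ltv") for i in range(10, 20)]
codes, key = blind_sessions(sessions, seed=7)

# The rater sees only the codes and records a grade for each one.
scores = {code: 5 for code in codes}  # placeholder grades
grouped = unblind(scores, key)
```

The rater never touches `key`. Only after every grade is recorded does the colleague run `unblind` to compare the two groups.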

Be Diligent About Statistics

This should go without saying, but in growth at early-stage companies, I estimate that only 20% of results are passed through the appropriate statistical tests. I’ve found that people often think that stats are used by nerds who are too cautious and don’t want to move quickly, but the opposite is true.

Stats tell you how much risk you’re taking on by accepting the results as true, which is a powerful data point if you’re moving at hyperspeed and iterating rapidly.

If you want to move more quickly, you can simply raise the P value threshold you’re willing to accept on your tests.

Medical journals typically require a P value below .05, which means that if there were truly no effect, a result at least this extreme would show up by chance less than 5% of the time. If you’re more risk tolerant and want to move faster on a particular project, accept a P value up to .10 or .15 (often described as 90% or 85% confidence). You’ll be wrong more often, but it will take less data to reach a decision.
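To see how the threshold trades off against data, here is a small sketch using the standard normal-approximation formula for the sample size needed to compare two conversion rates. The baseline rate, the lift, and the 80% power setting are assumptions I picked for illustration.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_arm(p_base, p_variant, alpha=0.05, power=0.8):
    """Approximate users needed in each arm to detect the difference
    between two conversion rates with a two-sided test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # significance threshold
    z_power = NormalDist().inv_cdf(power)          # chance of catching a real effect
    variance = p_base * (1 - p_base) + p_variant * (1 - p_variant)
    return ceil((z_alpha + z_power) ** 2 * variance / (p_base - p_variant) ** 2)


# Hypothetical test: 5% baseline conversion, hoping to detect a lift to 6%.
sizes = {alpha: sample_size_per_arm(0.05, 0.06, alpha=alpha)
         for alpha in (0.05, 0.10, 0.15)}
for alpha, n in sizes.items():
    print(f"threshold {alpha:.2f}: about {n} users per arm")
```

Loosening the threshold from .05 to .10 or .15 shrinks the required sample, which is where the speed comes from. The cost is a higher rate of false positives.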

Conclusion

As a field, growth is still in its infancy. While it’s advancing the approaches we take to grow companies, we still have quite a bit of refinement to do on our growth processes.

Because the science world has already adapted, evolved, and optimized the scientific method to produce better outcomes, we need to leverage what the science community has learned.

No one wants to reinvent the wheel! The same principles that have worked for science can be applied to running rapid iterative growth to produce more successful experiments on a faster timeline.